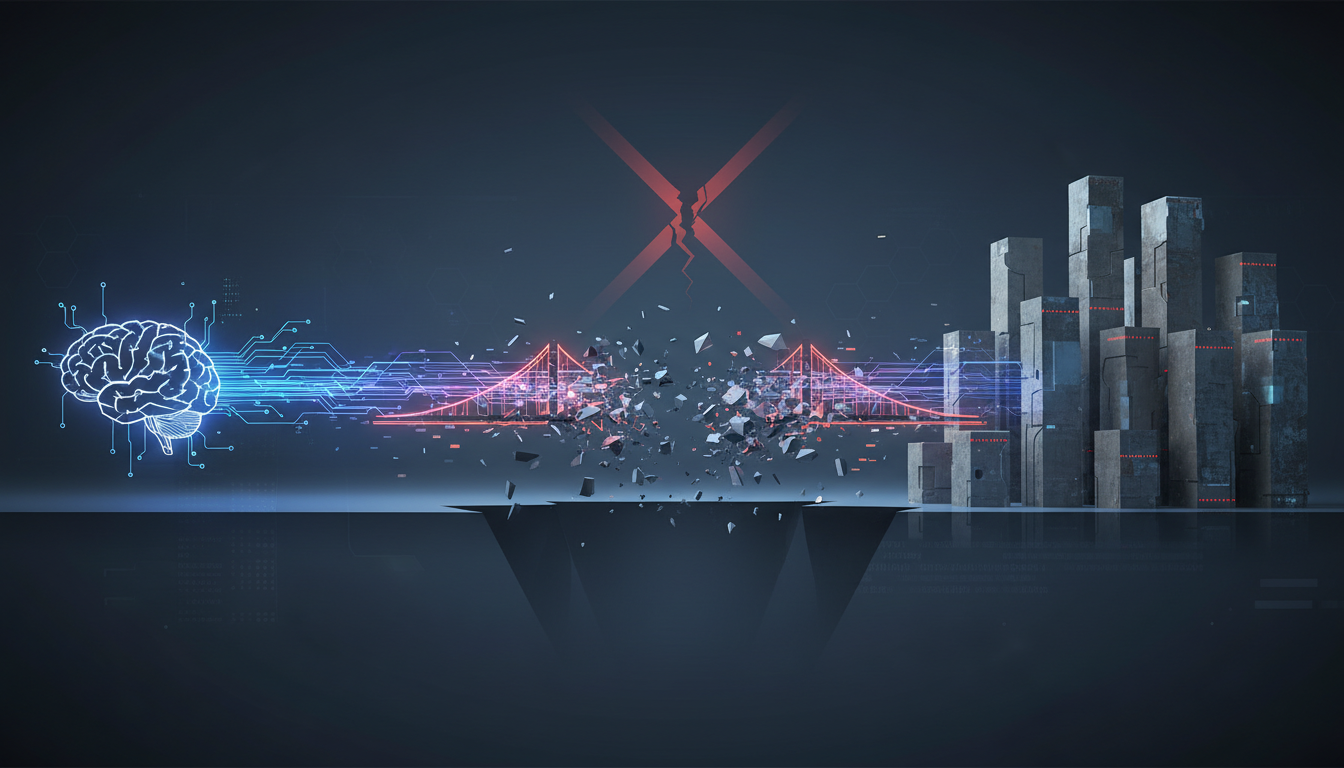

Organizations that try to integrate retrieval-augmented generation (RAG) systems with internal data sources often hit the same wall. Many companies, especially those in highly regulated industries like healthcare, struggle to bridge the gap between advanced AI capabilities and the complex, siloed nature of their enterprise data. The promise of AI-driven insights is often overshadowed by the reality of integration failures.

The Complex Web of Enterprise Systems

Enterprise RAG systems are far from simple. They require seamless access to a variety of data sources, such as SQL databases, CRM systems like Salesforce, and document repositories like SharePoint. Each source holds critical information that, when combined, can provide comprehensive insights. However, traditional methods of integration, which involve developing custom scripts and connectors for each data source, quickly become unsustainable.

The process of creating these bespoke integrations is fraught with challenges. Each connector must accommodate unique authentication mechanisms, handle data transformation, and ensure error recovery. As a result, what starts as a manageable task can rapidly escalate into an overwhelming burden of maintenance and technical debt. This is not just an issue of inefficiency but a fundamental flaw in how enterprises approach their RAG architectures.
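To make that burden concrete, here is a minimal sketch of what a single bespoke connector has to carry. Everything in it is hypothetical: `CustomConnector`, `TransientError`, and the fake CRM source are illustrative names, not part of any real product. The point is that authentication, transformation, and retry logic all have to be written and maintained again for every source.

```python
import time
from dataclasses import dataclass
from typing import Callable, Dict, List


class TransientError(Exception):
    """Raised by a fetch function when retrying might succeed."""


@dataclass
class CustomConnector:
    """One hand-written connector; every callable is source-specific glue."""
    name: str
    authenticate: Callable[[], str]              # returns a token or session id
    fetch: Callable[[str, str], List[dict]]      # (token, query) -> raw records
    transform: Callable[[dict], Dict[str, str]]  # raw record -> shared schema
    max_attempts: int = 3
    backoff_seconds: float = 0.0                 # set > 0 for real backoff

    def query(self, text: str) -> List[Dict[str, str]]:
        token = self.authenticate()
        for attempt in range(1, self.max_attempts + 1):
            try:
                return [self.transform(r) for r in self.fetch(token, text)]
            except TransientError:
                if attempt == self.max_attempts:
                    raise  # out of attempts: surface the failure
                time.sleep(self.backoff_seconds * attempt)
        return []  # only reached when max_attempts < 1


# Demo with a fake CRM source that fails once before succeeding.
calls = {"n": 0}


def flaky_crm_fetch(token: str, text: str) -> List[dict]:
    calls["n"] += 1
    if calls["n"] == 1:
        raise TransientError("simulated timeout")
    return [{"Id": "001", "Name": "Acme Health"}]


crm = CustomConnector(
    name="crm",
    authenticate=lambda: "fake-token",
    fetch=flaky_crm_fetch,
    transform=lambda r: {"id": r["Id"], "title": r["Name"]},
)
results = crm.query("acme")
```

Multiply this class by every SQL database, CRM, and document repository in the organization, each with its own quirks, and the maintenance cost described above follows quickly.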

The Hidden Costs of Custom Integrations

The financial and operational costs of custom integrations are substantial. Development teams often spend a disproportionate share of their time and resources maintaining these fragile connections. By some industry estimates, integration work can consume over 80% of a RAG project's lifecycle. That is time that could be better spent refining the AI's capabilities and improving the user experience.

Moreover, maintaining compliance with regulatory standards becomes a daunting task. Each custom connector adds layers of complexity that make it difficult to trace data origins and ensure that only authorized users access sensitive information. Compliance audits become slow and expensive, with organizations struggling to provide clear audit trails.

Performance and Accuracy Pitfalls

Custom integrations can significantly degrade the performance and accuracy of RAG systems. When connectors are not designed with scalability in mind, they struggle under the demands of real-time queries. The result is high latency and incomplete data retrieval. Incomplete retrieval, in turn, leaves the model with too little context, which can lead to AI hallucinations: responses that are incorrect or misleading.

Without a standardized approach to data integration, enterprises are left grappling with these performance issues. They find themselves in a cycle of patching problems rather than addressing the root cause of integration failures.

Enter the Model Context Protocol (MCP)

The Model Context Protocol (MCP) is emerging as a way out of this cycle. Unlike traditional integration methods, MCP defines a standardized protocol for connecting enterprise AI systems with diverse data sources. It shifts the work from building a custom connector per source to adopting one shared interface that any compliant data source can expose.

MCP acts as a universal plug, similar to USB-C in the hardware world. It lets AI systems interact with data sources without an intricate, custom-built connector for each one. By adopting MCP, enterprises can move from a model of integration to one of orchestration: each data source is exposed through an MCP server, and AI clients communicate with every server using the same protocol, which simplifies integration significantly.
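As a rough illustration of what "the same protocol" means on the wire: MCP messages are framed as JSON-RPC 2.0, and the specification defines methods such as `tools/list` and `tools/call`. The sketch below builds two such requests using only the standard library. The tool name `search_documents` and its arguments are hypothetical, and the exact field layout should be checked against the current MCP specification before relying on it.

```python
import json
from itertools import count
from typing import Optional

_request_ids = count(1)  # JSON-RPC requests carry an id so replies can be matched


def jsonrpc_request(method: str, params: Optional[dict] = None) -> str:
    """Serialize one JSON-RPC 2.0 request as a JSON string."""
    message = {"jsonrpc": "2.0", "id": next(_request_ids), "method": method}
    if params is not None:
        message["params"] = params
    return json.dumps(message)


# Ask a server which tools it offers.
list_tools = jsonrpc_request("tools/list")

# Invoke one tool by name. "search_documents" is a made-up example tool.
call_tool = jsonrpc_request(
    "tools/call",
    {
        "name": "search_documents",
        "arguments": {"query": "discharge policy", "limit": 5},
    },
)
```

The client code is identical whether the server sits in front of a SQL database, Salesforce, or SharePoint; only the tool names and arguments differ.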

MCP servers do more than just fetch data. They enable rich interactions, allowing RAG systems to perform complex tasks like running aggregation queries or generating real-time analytics. This capability transforms the AI from a passive data retriever into an active orchestrator of enterprise workflows.
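An aggregation tool of the kind described here might look something like the following sketch. It uses an in-memory SQLite database as a stand-in for a real enterprise source; the `visits` table, its columns, and the function names are all invented for illustration. In a real deployment this function would be registered as a tool on an MCP server rather than called directly.

```python
import sqlite3
from typing import Dict


def make_demo_db() -> sqlite3.Connection:
    """Build a tiny in-memory database standing in for an enterprise source."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE visits (department TEXT, wait_minutes INTEGER)")
    conn.executemany(
        "INSERT INTO visits VALUES (?, ?)",
        [("cardiology", 30), ("cardiology", 50), ("oncology", 20)],
    )
    return conn


def average_wait_by_department(
    conn: sqlite3.Connection, min_visits: int = 1
) -> Dict[str, float]:
    """Aggregation tool: mean wait time per department.

    The SQL is fixed and only min_visits is parameterized, so a caller
    cannot use this tool to run arbitrary queries against the source.
    """
    rows = conn.execute(
        "SELECT department, AVG(wait_minutes) FROM visits "
        "GROUP BY department HAVING COUNT(*) >= ? ORDER BY department",
        (min_visits,),
    ).fetchall()
    return {department: average for department, average in rows}


conn = make_demo_db()
summary = average_wait_by_department(conn)
busy_only = average_wait_by_department(conn, min_visits=2)
```

Exposing a narrow, parameterized aggregation instead of raw SQL access is one possible design choice: the model gets a computed answer rather than a pile of rows, and the data owner keeps control over what can be asked.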

Furthermore, MCP can substantially reduce the cost and complexity of integration. Replacing one-off connectors with a shared protocol shortens development timelines and reduces maintenance overhead. Compliance becomes more manageable as well, because every request passes through the same server interface, giving teams one consistent place to enforce access controls and record an audit trail.
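One way to picture the audit and access-control side is a thin wrapper around each tool on the server. The sketch below is an assumption about how a team might implement this, not something the protocol prescribes: `AuditedTool`, the role names, and the `lookup_record` tool are all hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Any, Callable, Dict, List, Set


@dataclass
class AuditedTool:
    """Wraps a tool handler with a role check and an append-only audit log."""
    name: str
    handler: Callable[..., Any]
    allowed_roles: Set[str]
    audit_log: List[Dict[str, Any]] = field(default_factory=list)

    def call(self, user: str, role: str, **arguments: Any) -> Any:
        allowed = role in self.allowed_roles
        # Log every attempt, including denied ones, before doing any work.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "tool": self.name,
            "user": user,
            "role": role,
            "arguments": arguments,
            "allowed": allowed,
        })
        if not allowed:
            raise PermissionError(f"role {role!r} may not call {self.name!r}")
        return self.handler(**arguments)


lookup = AuditedTool(
    name="lookup_record",
    handler=lambda record_id: {"record_id": record_id, "status": "active"},
    allowed_roles={"clinician"},
)

allowed_result = lookup.call("dr_lee", "clinician", record_id="r-17")

try:
    lookup.call("temp_01", "contractor", record_id="r-17")
    denied = False
except PermissionError:
    denied = True
```

Because every request enters through `call`, the log answers the auditor's questions (who asked, for what, and whether it was permitted) without stitching together records from separate connectors.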

Building a Sustainable RAG Future

To realize the full potential of RAG systems, enterprises must embrace a federated approach to data integration. Business units should own their data interfaces through MCP servers, while AI teams focus on orchestrating intelligence across these sources. This shift not only enhances the performance and accuracy of AI systems but also ensures they remain adaptable to the ever-changing demands of business.
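The division of ownership might be sketched as follows. This is a simplified, in-process stand-in: in practice each business unit would run its own MCP server and the orchestrator would reach it over the protocol. `FederatedRegistry` and the two example search functions are hypothetical names used only for illustration.

```python
from typing import Callable, Dict, List, Tuple

Search = Callable[[str], List[str]]  # a business unit's search entry point


class FederatedRegistry:
    """AI-team side: knows which unit owns which interface, nothing more."""

    def __init__(self) -> None:
        self._servers: Dict[str, Search] = {}

    def register(self, owner: str, search: Search) -> None:
        if owner in self._servers:
            raise ValueError(f"{owner!r} is already registered")
        self._servers[owner] = search

    def gather_context(self, query: str) -> Tuple[Dict[str, List[str]], List[str]]:
        """Query every registered source; report which ones failed."""
        context: Dict[str, List[str]] = {}
        failed: List[str] = []
        for owner, search in self._servers.items():
            try:
                context[owner] = search(query)
            except Exception:
                failed.append(owner)  # keep going, but record the gap
        return context, failed


# Each business unit supplies and maintains its own function.
def finance_search(query: str) -> List[str]:
    return [f"invoice matching {query!r}"]


def clinical_search(query: str) -> List[str]:
    raise TimeoutError("server did not respond")


registry = FederatedRegistry()
registry.register("finance", finance_search)
registry.register("clinical", clinical_search)
context, failed = registry.gather_context("Q3 claims")
```

Returning the list of failed sources alongside the context lets the orchestration layer tell the model, or the user, that an answer was built from incomplete data rather than silently proceeding.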

In summary, the future of enterprise RAG lies in moving away from brittle, custom integrations toward a standardized, sustainable architecture. By adopting MCP, organizations can turn data silos into a cohesive framework for intelligent decision-making. The bridge to a more efficient and effective RAG system is already built; it is time for enterprises to start using it.