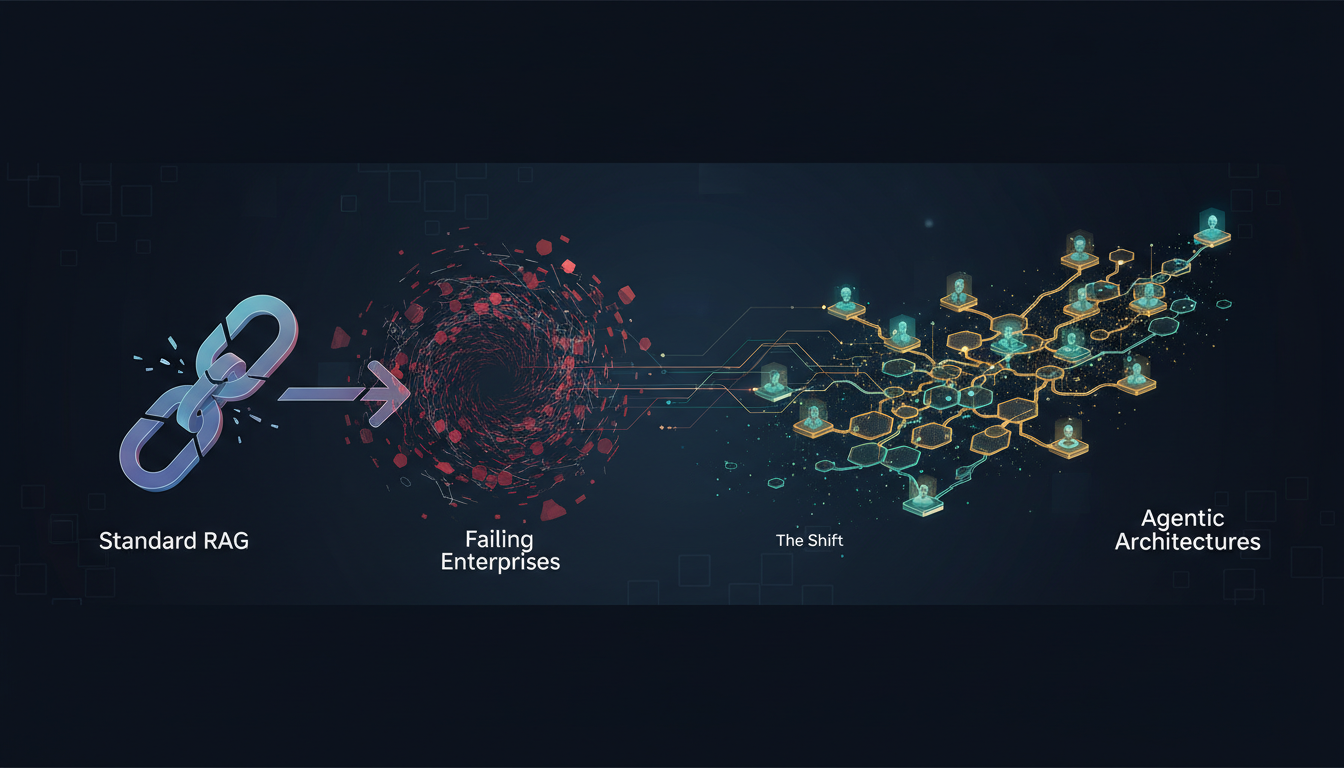

As enterprises increasingly rely on AI to streamline operations and enhance decision-making, they often turn to Retrieval-Augmented Generation (RAG) for knowledge management. However, the traditional RAG architecture, which combines simple vector search with a Large Language Model (LLM), is proving inadequate for the complexities of enterprise environments. This article explores the critical shortcomings of standard RAG systems and highlights why the shift to agentic architectures is essential for future-proofing enterprise AI initiatives.

The Fundamental Architecture Problem

The conventional RAG architecture is a linear pipeline: documents are embedded into vectors and stored in a database, and at query time the most similar vectors are retrieved and passed to an LLM, which generates the response. The design is simple, but it struggles with complex enterprise queries because its stages are disconnected. Retrieval runs once, with no knowledge of what the final answer will require, and generation has no insight into how or why the retrieved documents were selected. As a result, the system cannot assess its own retrievals or adapt when they fall short.

Take, for instance, a legal department asking about indemnification obligations under a specific contract clause. A standard RAG system might fetch sections that mention the relevant terms, but it will fail to synthesize across clauses, recognize jurisdictional nuances, or flag content that requires human judgment. The root cause is the rigid separation of retrieval and reasoning, a structural flaw that no amount of parameter tuning can resolve.
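A minimal sketch of the linear pipeline described above makes its shape concrete. It assumes a toy bag-of-words "embedding" in place of a real embedding model and a stub `generate` function in place of an LLM call; all function names are hypothetical. The point is structural: retrieval runs exactly once, and generation receives chunks with no signal about why they were chosen or whether they are sufficient.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy 'embedding': bag-of-words term counts (stand-in for a real model)."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def build_index(docs: list[str]) -> list[tuple[str, Counter]]:
    # Embed every document once and store (text, vector) pairs.
    return [(doc, embed(doc)) for doc in docs]


def retrieve(index: list[tuple[str, Counter]], query: str, k: int = 2) -> list[str]:
    # One-shot top-k similarity search; nothing downstream can ask for more.
    q = embed(query)
    ranked = sorted(index, key=lambda item: cosine(q, item[1]), reverse=True)
    return [doc for doc, _ in ranked[:k]]


def generate(query: str, context: list[str]) -> str:
    # Placeholder for the LLM call: it sees the chunks, but not why they were picked.
    return f"Answer to {query!r} based on {len(context)} chunk(s)"


def linear_rag(index: list[tuple[str, Counter]], query: str) -> str:
    # embed -> retrieve -> generate, with no feedback loop between stages.
    return generate(query, retrieve(index, query))
```

Every improvement discussed in the rest of this article amounts to adding a feedback path somewhere in `linear_rag`.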

The Context Collapse Crisis

Enterprise information is dynamic, interconnected, and context-dependent, traits that standard RAG systems handle poorly. This produces what researchers call "context collapse." Temporal context, such as whether a policy has since been updated, is ignored unless the pipeline explicitly tracks it, and most pipelines do not. Organizational context can differ sharply across departments, yet vector embeddings treat every occurrence of a term the same way. Conversational context suffers too: each query is handled independently, so follow-up questions are not linked to earlier responses.

These deficiencies are particularly damaging in finance, healthcare, and law, where tracking how regulations evolve, synthesizing patient data, and analyzing legal precedent are core tasks. Because standard RAG systems cannot handle these scenarios reliably, they contribute to the reported 40-60% failure rate of enterprise deployments.
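Temporal context, at least, can be partly restored with explicit metadata. The sketch below assumes each chunk carries hypothetical `policy_id` and `effective` fields. It drops superseded or not-yet-effective versions before ranking, and applies an exponential half-life discount to older material. The half-life value is an assumed tuning knob, and the discount is a common heuristic rather than a standard.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Doc:
    policy_id: str   # hypothetical metadata: which policy this text belongs to
    effective: date  # hypothetical metadata: when this version took effect
    text: str


def latest_versions(docs: list[Doc], as_of: date) -> list[Doc]:
    """Keep only the newest version of each policy that is in force on `as_of`."""
    current: dict[str, Doc] = {}
    for d in docs:
        if d.effective > as_of:
            continue  # not yet in force
        best = current.get(d.policy_id)
        if best is None or d.effective > best.effective:
            current[d.policy_id] = d
    return list(current.values())


def recency_weighted(similarity: float, doc_date: date, as_of: date,
                     half_life_days: float = 180.0) -> float:
    """Halve a document's similarity score for every `half_life_days` of age."""
    age_days = max((as_of - doc_date).days, 0)
    return similarity * 0.5 ** (age_days / half_life_days)
```

Filtering handles the hard case (a superseded policy must never be cited), while the decay only nudges rankings. Organizational and conversational context need separate mechanisms, such as department metadata or query rewriting against chat history.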

The Evaluation Trap

A significant problem with current RAG implementations is their focus on the wrong evaluation metrics. Standard assessments prioritize retrieval precision, recall, and latency, which measure speed and document relevance but not whether the generated answers are accurate or useful. A system can score well on these benchmarks yet fail badly in real-world applications that demand nuanced understanding, such as medical diagnosis or legal interpretation.
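The gap between retrieval metrics and outcome metrics shows up even in a tiny example. Precision@k and recall@k below follow their standard definitions. `task_success` is a deliberately crude, hypothetical outcome check (substring matching of required facts); a real evaluation would use a rubric or human review. The contract clauses and facts are made up for illustration.

```python
def precision_at_k(retrieved: list[str], relevant: set[str], k: int) -> float:
    """Fraction of the top-k retrieved items that are relevant."""
    return sum(doc in relevant for doc in retrieved[:k]) / k


def recall_at_k(retrieved: list[str], relevant: set[str], k: int) -> float:
    """Fraction of all relevant items that appear in the top k."""
    if not relevant:
        return 0.0
    return sum(doc in relevant for doc in retrieved[:k]) / len(relevant)


def task_success(answer: str, required_facts: list[str]) -> float:
    """Hypothetical outcome metric: share of required facts stated in the answer."""
    if not required_facts:
        return 1.0
    text = answer.lower()
    return sum(fact.lower() in text for fact in required_facts) / len(required_facts)


# Retrieval is perfect, but the answer omits the liability cap found in clause 9.
retrieved = ["clause_7", "clause_9"]
relevant = {"clause_7", "clause_9"}
answer = "The supplier must indemnify the buyer against third-party claims."
required = ["supplier must indemnify", "capped at fees paid"]

print(precision_at_k(retrieved, relevant, k=2))  # 1.0
print(recall_at_k(retrieved, relevant, k=2))     # 1.0
print(task_success(answer, required))            # 0.5
```

A dashboard tracking only the first two numbers would report this query as a success.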

Databricks’ KARL agent addresses this by using reinforcement learning to optimize for complete task outcomes rather than intermediate retrieval metrics. The system learns which strategies lead to correct and useful responses, shifting the target from component performance to end-to-end success.
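KARL's actual training setup is not described here. The example below illustrates only the underlying idea of rewarding outcomes, using a much simpler technique: an epsilon-greedy multi-armed bandit that chooses among retrieval strategies and is rewarded solely by whether the final answer succeeded. The strategy names and success rates in the simulation are invented.

```python
import random


class StrategySelector:
    """Epsilon-greedy bandit over retrieval strategies, scored by end-task outcome."""

    def __init__(self, strategies: list[str], epsilon: float = 0.1, seed: int = 0):
        self.strategies = list(strategies)
        self.epsilon = epsilon
        self.rng = random.Random(seed)
        self.counts = {s: 0 for s in self.strategies}
        self.values = {s: 0.0 for s in self.strategies}  # running mean reward per strategy

    def choose(self) -> str:
        if self.rng.random() < self.epsilon:
            return self.rng.choice(self.strategies)  # explore: try a random strategy
        return max(self.strategies, key=lambda s: self.values[s])  # exploit best so far

    def update(self, strategy: str, reward: float) -> None:
        # The reward reflects whether the final answer was correct and useful,
        # not how precise the intermediate retrieval step was.
        self.counts[strategy] += 1
        self.values[strategy] += (reward - self.values[strategy]) / self.counts[strategy]


if __name__ == "__main__":
    # Simulated environment with made-up per-strategy success rates (illustration only).
    true_success = {"vector_only": 0.4, "keyword_then_vector": 0.6, "multi_hop": 0.8}
    env = random.Random(42)
    selector = StrategySelector(list(true_success), epsilon=0.1, seed=1)
    for _ in range(5000):
        s = selector.choose()
        selector.update(s, 1.0 if env.random() < true_success[s] else 0.0)
    print(selector.values)
```

Over many episodes the selector should come to favor whichever strategy most often yields correct answers, even if another strategy posts better retrieval precision. Reinforcement learning over multi-step agent trajectories is considerably more involved than this single-decision bandit.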

The Agentic Alternative

Agentic RAG architectures overcome these limitations through autonomous planning, iterative refinement, and flexible tool use. Autonomous planning turns retrieval into a strategic process: the system identifies what information it needs, devises a retrieval plan, and adjusts when initial attempts fall short. Iterative refinement lets the system evaluate its own output and retrieve additional data until the response is adequate. Tool-use flexibility lets it draw on diverse data sources and retrieval strategies, including escalating complex queries to a human.
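The control flow of these three capabilities can be sketched as a bounded loop. Every pluggable piece here (`plan`, the `tools` registry, `is_sufficient`, `refine`, `generate`) is a hypothetical callable; in a real system several of them would be LLM calls. The sketch fixes only the structure: plan, retrieve from whichever tool the plan names, check sufficiency, refine, and escalate to a human when the round budget runs out.

```python
from typing import Callable, Optional

Tool = Callable[[str], list[str]]  # hypothetical retrieval tool: query -> text chunks
Step = tuple[str, str]             # (tool name, query) chosen by the planner


def agentic_answer(
    question: str,
    plan: Callable[[str], list[Step]],
    tools: dict[str, Tool],
    is_sufficient: Callable[[str, list[str]], bool],
    refine: Callable[[str, list[str]], Optional[Step]],
    generate: Callable[[str, list[str]], str],
    max_rounds: int = 3,
) -> str:
    evidence: list[str] = []
    steps = plan(question)  # autonomous planning: decide what to look up, and where
    for _ in range(max_rounds):
        for tool_name, query in steps:
            for chunk in tools[tool_name](query):  # tool use: dispatch to the named source
                if chunk not in evidence:
                    evidence.append(chunk)
        if is_sufficient(question, evidence):  # self-assessment before answering
            return generate(question, evidence)
        follow_up = refine(question, evidence)  # iterative refinement: what is missing?
        if follow_up is None:
            break
        steps = [follow_up]
    # Budget exhausted or no further leads: hand off instead of guessing.
    return f"ESCALATE_TO_HUMAN: insufficient evidence for {question!r}"
```

The `max_rounds` cap and the explicit escalation path are design choices: without them, an agent that never judges its evidence sufficient would either loop indefinitely or fall back to answering from whatever it happened to find.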

Databricks’ KARL exemplifies this architecture: it adapts its retrieval method to the query type and uses reinforcement learning to generalize across different enterprise search behaviors. The result is a system that does not just retrieve documents but reasons about what information is needed and how best to obtain it.
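KARL learns its retrieval policy, and its internals are not covered here. A hand-written, rule-based router is a far simpler stand-in, but it shows what "adapting retrieval to the query type" means mechanically. The patterns and strategy names below are illustrative assumptions, not KARL's.

```python
import re


def route(query: str) -> str:
    """Pick a retrieval strategy from surface features of the query (hypothetical rules)."""
    q = query.lower()
    # Exact identifiers (clause numbers, ticket IDs): lexical search beats embeddings.
    if re.search(r"\b(section|clause|article)\s+\d+(\.\d+)*\b", q) or re.search(r"[a-z]+-\d+", q):
        return "keyword"
    # Time-sensitive wording: restrict to versions in force before ranking.
    if re.search(r"\b(latest|current|as of|since|before|after)\b", q):
        return "recency_filtered"
    # Comparison or synthesis: gather evidence from several sources in multiple hops.
    if re.search(r"\b(compare|versus|vs\.?|across|difference between)\b", q):
        return "multi_hop"
    # Default: plain semantic similarity search.
    return "vector"
```

Hand-written rules like these are brittle and order-dependent, which is the motivation for learning the routing decision from task outcomes instead.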

What This Means for Your RAG Implementation

Transitioning from standard to agentic RAG is not optional; it is a necessity driven by the evolving demands of enterprise knowledge management. Organizations must reassess their current systems by asking three critical questions: Does the system handle complex reasoning? Can it adapt when initial results are inadequate? Are evaluations based on real-world outcomes rather than academic benchmarks?

If these questions reveal gaps, the path forward involves adopting agentic frameworks, redefining evaluation metrics around end-to-end task completion, and building infrastructure that supports dynamic retrieval strategies. The investment is substantial, but the cost of inaction (persistent failures, unreliable outputs, and unrealized AI potential) is far greater.

The evolution of RAG from simple retrieval to agentic architectures reflects the changing landscape of enterprise knowledge systems. As the complexity of enterprise demands grows, so too must the sophistication of the tools we use to manage them. Organizations that embrace this shift will be better positioned to leverage AI effectively, ensuring their systems deliver on their promises and meet the intricate needs of the modern enterprise.